Why Healthcare AI Governance Isn't What You Think It Is

AI governance isn’t nested boxes or monthly committees. It’s architecture. Under HIPAA and GDPR, your “data steward” often can’t even review the data. Real governance is built into the plumbing, validation, lineage, and security enforced automatically. Otherwise, it’s theater.

On a Tuesday morning in March, a cardiologist at a major teaching hospital receives an alert on her phone. An AI system has flagged one of her patients, a sixty-four-year-old man with atrial fibrillation, as high-risk for stroke within thirty days. The alert recommends escalating anticoagulation. She stares at it, then closes it and moves on.

Not because the alert is wrong. But because she has no idea where the number came from.

Is it based on yesterday's labs or last month's? Did the system account for recent medication changes? Is the algorithm validated for patients like hers? She cannot answer these questions, so she cannot trust the alert. She ignores it and relies on data she can see herself.

Somewhere in the hospital's IT department, someone is frustrated. They've invested in this AI system, integrated it with the EHR, set up the data pipelines. And clinicians aren't using it. The problem, they assume, is resistance to change. Or maybe they need a "governance framework."

What they don't realize is that the real problem has nothing to do with the AI. It's whether anyone can answer a simple question: where did this number come from, and can I trust it?

A tax accountant faces a similar problem on the same Tuesday morning. A client calls: "I got a 1099 from my brokerage, but it shows a different amount than my account. Which number do I use?"

The accountant doesn't hesitate. "Send me the 1099. That's what the IRS will expect."

When the accountant prepares the tax return, every number is traceable. The W-2 comes from the employer's W-2. The mortgage interest comes from the bank's 1098. If an IRS auditor asks where a number came from, the accountant points to a source document.

Then came software. Tax preparation moved to QuickBooks and specialized platforms. And something interesting happened: the software didn't just speed things up. It made governance possible.

You cannot enter a W-2 as free text. The field only accepts numbers in specific formats. You cannot skip a dependent's Social Security number. The software requires it. You cannot claim a business expense without categorizing it. Every data element has a type, a format, allowed values. The software validates these automatically.

And because everything is digital, there's an audit trail. Every change is timestamped and linked to a source. If the IRS audits you seven years later, the accountant can reconstruct the entire filing, source by source.

The accountant's judgment still matters. But the friction of managing data disappeared. The system enforces what can and cannot be entered. The system maintains an audit trail. The system knows that W-2 income and 1099 income are different types flowing to different places on the return.

This is governance. Not as policy. But as architecture. Baked in.

Now consider a surgeon on that same Tuesday. She has finished a spinal fusion. A patient with severe back pain and disc herniation underwent surgery. Now she documents what happened.

She dictates: "Patient is a fifty-two-year-old male with L4-L5 disc herniation. Underwent L4-L5 microdiscectomy via midline approach. Intraoperative neuromonitoring normal throughout. Minimal blood loss. No complications."

This note will follow the patient for decades. If complications develop, it explains what was done and why. If the patient moves hospitals, it travels with him. If there's ever a legal question, it becomes evidence.

The surgeon's note works because of the same principles that make the accountant's return work. Terminology is standardized. Every surgeon knows what "L4-L5 microdiscectomy via midline approach" means. Data comes from observed sources: the surgical team was there; neuromonitoring ran in real-time. Documentation happens while memory is fresh. The note is reviewed and signed by the attending. The surgeon's medical license and reputation hinge on accuracy.

Now imagine the surgeon's world goes digital. A hospital implements AI that synthesizes data from multiple sources: EHR data, imaging, lab results, genetics, wearables, insurance claims, social determinants. The AI generates risk assessments, flags drug interactions, sends alerts.

The surgeon receives: "Patient's potassium is elevated. Consider repletion."

She stares at it. When was this drawn? By which lab? Is the algorithm validated for this patient? Unlike her medical training or the accountant's software, there's no single source document, no professional standard, no clear accountability.

So she ignores the alert and relies on data she understands.

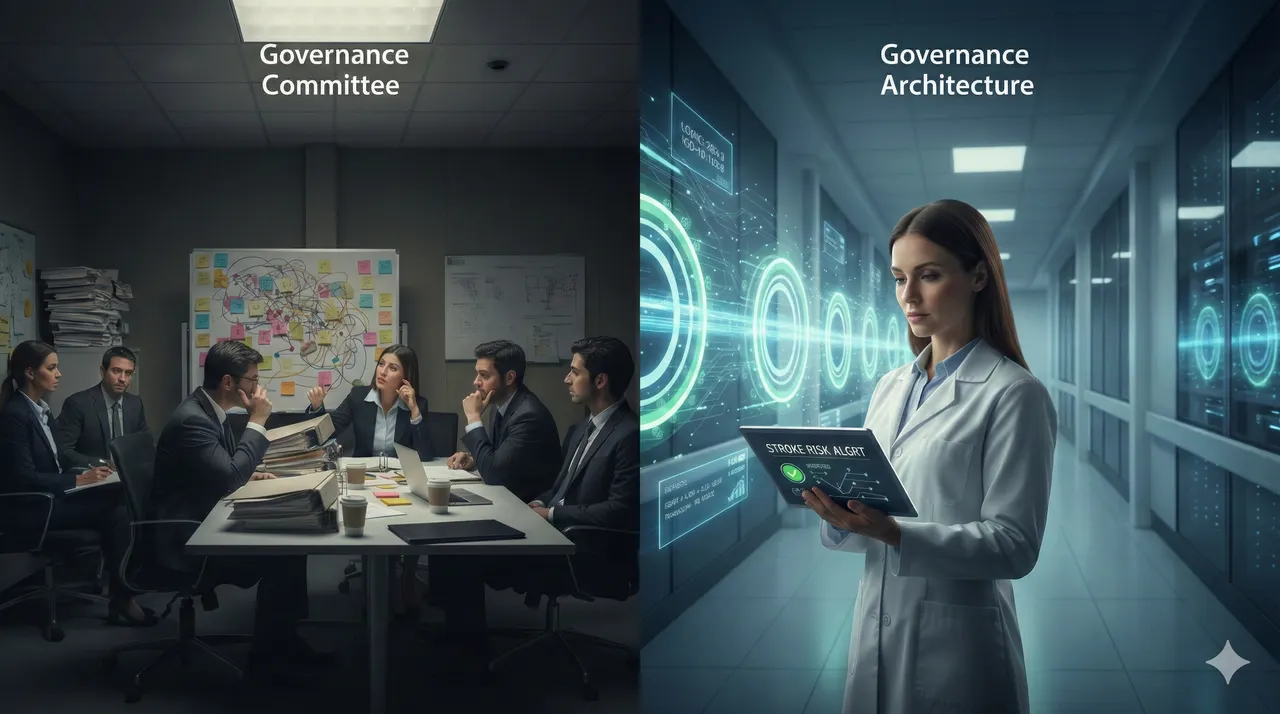

The IT director and surgeon meet about why the AI isn't being used. The IT director says they need better "governance." The surgeon agrees they need governance. But they're talking about completely different things.

The IT director thinks governance means appointing a data steward, forming a committee, writing policies saying "all patient data must be validated."

The surgeon thinks governance means someone takes responsibility for whether that potassium level is actually accurate. Someone who can answer: where did this come from, and can I trust it?

These are not the same problem. One is administrative. One is clinical. One can be solved by hiring a person. The other requires redesigning the entire data pipeline.

What Governance Actually Means

In the forty-seven LinkedIn articles about AI governance that showed up in my feed this week, governance looks like nested boxes. There's a layer for "foundational data management." Above that, "data quality and observability." Then "policies and standards," "ownership and accountability," "data products and consumption." Finally, "business decisions."

Each layer is color-coded. Each contains sub-boxes: "master data management," "metadata standards," "access controls," "data stewards," "governance council." Professional. Comprehensive. Almost entirely useless in practice.

A hospital IT director showed one of these frameworks to clinicians. A surgeon asked: "Does this tell me whether the potassium level in my alert is from today or last week?" The answer was no. The framework has a box labeled "data quality and observability," but it doesn't say how you actually observe data quality in real-time or what happens when something goes wrong.

A chief medical officer told his team to implement a data governance committee. Twelve people meet monthly. They've created a charter, defined roles, documented policies. A year later, the EHR still sends alerts based on data nobody can verify. The committee solved nothing. But leadership feels like governance is happening.

This is governance theater.

The frameworks treat governance as something you add on top of existing systems. Build your platform, then add governance. Implement your AI, then add oversight. The solution to bad data is a steward who reviews it. The solution to unclear accountability is a council. The solution to governance problems is more governance: more policies, more committees, more documentation.

But here's what happens: the governance layer becomes a bottleneck. The steward can't possibly review all the data. In fact, under HIPAA and GDPR, the steward often isn't legally allowed to review clinical patient data without a legitimate clinical need. The committee can't meet often enough to address real-time problems. Policies get written and ignored. And the underlying problem - the broken data pipeline - never gets fixed.

The accountant's tax software and the surgeon's professional training work on a different principle entirely. Governance isn't added on top. It's embedded from the start.

In tax software, you cannot violate the rules because the software won't let you. You cannot enter an invalid date format. You cannot skip a required field. You cannot categorize income incorrectly. The software enforces governance through architecture.

In medical training, governance is embedded in professional standards built over decades. A surgeon learns to document a specific way because that's how surgeons document. Terminology is standardized by the surgical community. Accountability is personal because the surgeon's license and reputation are on the line. Governance works because it's embedded in professional culture and regulation, not because someone appointed a committee.

Healthcare AI needs to work the same way. Not by adding oversight layers. But by designing the data pipeline so governance happens as data flows through the system.

This is where the framework boxes completely miss the point. They mistake administration for architecture.

Governance as Architecture

When a patient's lab result arrives at a well-designed healthcare data platform, it doesn't just get stored as a number. It arrives as a structured message with mandatory fields: patient identifier, test code, result value, units, reference range, timestamps, source laboratory, quality flags.

The system validates every field against a standard. The patient identifier must match a known patient. The test code must be a valid LOINC code. Units must be present and consistent. The timestamp must indicate clinically current data. If anything fails, the system flags it before data is ever loaded. No steward reviews it. The rules are built in.

Once validated, data is encrypted at rest, access is controlled by patient and data type, and every transformation is logged. A cardiologist sees only her own patients. She cannot see psychiatric history without documented clinical need. The data model itself enforces semantics; a "potassium level" isn't just a number but a measurement contextualized by kidney function, medications, and recent trends.

When the AI pulls data, it's already been validated, contextualized, and secured. When something goes wrong later, you can audit the entire chain: when the test was drawn, which lab ran it, which validations it passed, who accessed it, what the AI did with it.

This is governance embedded in architecture. Data modeling enforces semantics. Master data management reconciles patients across systems. ETL processes enforce transformation rules before data reaches the AI. Data lineage is automatic (when designed well; few hospitals achieve this yet). Quality monitoring is continuous. Security is architectural.

When governance is built this way, the AI system receives trustworthy data. The clinician receives alerts backed by data she can verify. Accountability shifts from "who made this decision?" to "what was the data quality when the decision was made?"

This is the difference between governance as policy and governance as architecture. One requires people to follow rules. The other makes certain kinds of rule-breaking impossible.

Why This Matters Right Now

Most AI systems implemented in healthcare sit unused. Clinicians ignore alerts. Not because they distrust AI, but because they cannot verify the data behind it.

But there's a more serious problem: liability and regulatory exposure.

In 2023 and 2024, the FDA released guidance on clinical AI validation and real-world performance monitoring. CMS began auditing hospitals on how they oversee algorithmic decision-making. The HHS Office for Civil Rights has signaled that healthcare organizations are liable for harm caused by AI systems that rely on unvalidated or unauditable data. Regulators are moving from "please think about governance" to "prove your governance."

When a patient receives an AI alert and is harmed, a lawyer will ask: "Where did the data come from? Who validated it? Can you prove it was accurate when the AI consumed it? Show me the audit trail."

If the answer is "we have a governance committee that meets monthly," the organization loses.

If the answer is "the data platform automatically validates all data before the AI ever sees it, and here's the complete lineage," the organization has a defense.

The organizations that survive the regulatory wave ahead aren't the ones with impressive frameworks or dedicated committees. They're the ones with data platforms designed so governance happens automatically, continuously, auditably. Everything else is paperwork that won't hold up in court.

The Path Forward

On that Tuesday morning in March, the cardiologist closed an alert because she couldn't trust the data behind it. In another hospital, a surgeon reviewed an AI alert carefully because she could trace every data point back to its source. She knew which labs were recent, which imaging current, which genetic markers validated. She made a different clinical decision. The patient had a better outcome.

The difference between these hospitals was not the AI. It was the platform.

One had governance theater: committees, policies, stewards who couldn't legally review the data. The other had governance architecture: a platform where validation, security, lineage, and quality monitoring happened automatically.

This is the insight that LinkedIn's governance frameworks miss. Governance is not something you add after you've built the system. It's not something a committee oversees. It's not a layer of management on top of operations.

Governance is the system itself.

The accountant's tax software works because governance is embedded in validation. The surgeon's professional training works because governance is embedded in standards and accountability. Healthcare AI will work the same way, but only if the data platform is designed from the start with governance as architecture.

This requires different thinking about healthcare technology. Not "build the AI first, add governance later." But "design the platform so governance happens automatically, then build the AI on top."

It requires investment in unglamorous parts: data modeling, master data management, ETL pipelines, data quality monitoring, audit logging, encryption, access controls. These don't make headlines. They don't attract venture capital. But they're the difference between an AI system clinicians trust and use, versus one that sits ignored. Between a platform that can defend itself in court and one that cannot.

The cardiologist who closed that stroke alert will eventually receive an alert from a system backed by a well-designed platform. She will verify the data. She will trust the recommendation. She will act on it. The patient will benefit.

That's when healthcare AI governance moves beyond frameworks and committees. That's when governance becomes what it needs to be: invisible, automatic, and built into every byte of data flowing through the system.