Understanding FDA's 2026 Clinical Decision Support Guidance: It's Not Deregulation

The FDA's January 2026 CDS guidance isn't deregulation; it's smart, risk-based clarity. AI tools that support physician judgment without black-box automation can move faster, while high-risk diagnostic systems remain regulated. That's not stepping back; it's focusing oversight where it truly matters.

What can we learn from the latest recommendations?

We've all heard that the FDA is easing regulation on AI in healthcare. That's not what's happening. The final Clinical Decision Support Software guidance published in January 2026 doesn't deregulate AI; it draws a bright line between what requires oversight and what doesn't. And that line is drawn based on risk, not convenience.

What the FDA has done is provide much-needed clarity on which clinical decision support software functions fall outside the definition of a medical device and, critically, which ones remain firmly under regulatory oversight.

The Four Criteria Framework

Under the guidance, which interprets four criteria written into the FD&C Act by the 21st Century Cures Act, Clinical Decision Support software intended for healthcare professionals is excluded from the definition of a device only if it meets ALL four criteria:

- It does NOT acquire, process, or analyze a medical image, a signal from an in vitro diagnostic device, or a pattern or signal from a signal acquisition system

- It DOES display, analyze, or print medical information about patients or other medical information (such as peer-reviewed clinical studies and clinical practice guidelines)

- It DOES provide recommendations (not directives) to support physician decisions about the prevention, diagnosis, or treatment of a disease or condition

- It DOES enable the physician to independently review the basis for its recommendations, so that the physician does not rely primarily on them to make a diagnosis or treatment decision

Miss even one criterion, and you're looking at a regulated medical device.
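The all-or-nothing logic of the framework can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a regulatory determination tool: the `CdsFunction` class and its field names are hypothetical paraphrases of the four criteria, and the `time_critical` flag encodes the guidance's position (covered later in this article) that time-sensitive use defeats Criterion 4. Real determinations depend on a product's intended use and call for regulatory judgment, not boolean flags.

```python
from dataclasses import dataclass


@dataclass
class CdsFunction:
    """Hypothetical summary of a software function's intended use.

    Field names paraphrase the four criteria; they are not FDA terms.
    """
    analyzes_images_or_signals: bool     # Criterion 1: must be False
    displays_medical_information: bool   # Criterion 2: must be True
    provides_recommendations: bool       # Criterion 3: must be True
    enables_independent_review: bool     # Criterion 4: must be True
    time_critical: bool = False          # assumption: time-critical use fails Criterion 4


def failed_criteria(fn: CdsFunction) -> list[int]:
    """Return the numbers of the criteria this function fails."""
    failures = []
    if fn.analyzes_images_or_signals:
        failures.append(1)
    if not fn.displays_medical_information:
        failures.append(2)
    if not fn.provides_recommendations:
        failures.append(3)
    # Under time pressure there is no room for independent review.
    if not fn.enables_independent_review or fn.time_critical:
        failures.append(4)
    return failures


def is_non_device_cds(fn: CdsFunction) -> bool:
    """Non-device CDS only if no criterion fails."""
    return not failed_criteria(fn)


# Hypothetical examples drawn from the categories in this article.
ddi_alert = CdsFunction(
    analyzes_images_or_signals=False,
    displays_medical_information=True,
    provides_recommendations=True,
    enables_independent_review=True,
)
ecg_classifier = CdsFunction(  # meets everything except Criterion 1
    analyzes_images_or_signals=True,
    displays_medical_information=True,
    provides_recommendations=True,
    enables_independent_review=True,
)
time_critical_alert = CdsFunction(  # recommendations shown during a time-critical task
    analyzes_images_or_signals=False,
    displays_medical_information=True,
    provides_recommendations=True,
    enables_independent_review=True,
    time_critical=True,
)

print(is_non_device_cds(ddi_alert))             # True
print(failed_criteria(ecg_classifier))          # [1]
print(failed_criteria(time_critical_alert))     # [4]
```

Returning the list of failed criteria, rather than a bare yes/no, mirrors how the framework is actually used: knowing which criterion fails tells a developer what would have to change in the product's design or intended use.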

What This Really Means

The guidance makes clear distinctions that many in the industry may find uncomfortable:

Non-Device CDS (examples that meet all four criteria):

- Drug-drug interaction alerts based on patient records

- Treatment option lists derived from clinical guidelines

- Reminder systems for preventive care

- Discharge planning assistance tools

Regulated Device Software (examples that fail one or more criteria):

- Software analyzing CT images to detect abnormalities

- Algorithms processing ECG waveforms to diagnose arrhythmias

- Tools analyzing genetic sequences to identify mutations

- Systems that provide specific diagnostic outputs in time-critical situations

The Time-Critical Distinction

One of the most significant aspects of the guidance is the emphasis on time-criticality. The FDA explicitly states that software intended for critical, time-sensitive tasks cannot meet Criterion 4 because physicians won't have sufficient time to independently review the basis for recommendations.

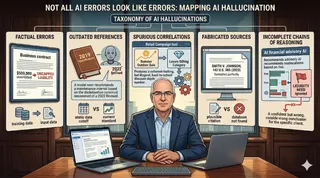

This is not arbitrary. It reflects a deep understanding of automation bias, the human tendency to over-rely on automated recommendations, especially under time pressure.

The Independent Review Requirement

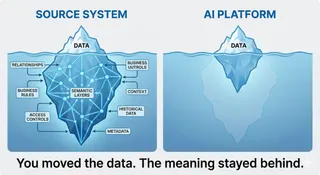

Criterion 4 deserves special attention because it reveals something fundamental: the FDA understands that AI solutions cannot be black boxes. Medical decision-making is a process that takes time, often requires team effort, and sometimes demands repetition. It's not a simple riddle where the answer alone matters.

The journey is as important as the final result. Physicians learn to discuss options, to quote studies, to defend their reasoning. They justify why a specific test is needed and explain what the next steps would be if that test comes back positive or negative. This deliberative process is core to good medicine, and the FDA recognizes that AI must support, not replace, this process.

For software to qualify as non-device CDS, it must enable physicians to independently review and understand:

- The purpose and intended use: This isn't just a label; it's about clearly defining which clinical scenarios the software addresses, which patient populations it serves, and what role it plays in the clinical workflow. The physician needs to understand the boundaries of where this tool should and shouldn't be applied.

- Input data requirements and quality standards: The physician must know exactly what information the algorithm is considering, how that data should be obtained, why it's relevant, and what quality standards apply. Missing or poor-quality inputs can lead to unreliable outputs, and the physician needs to recognize when data limitations might affect the recommendation.

- A plain-language description of the underlying algorithm: This means explaining the logic and methodology in terms the intended user can understand. Was it based on meta-analysis of clinical trials? Expert consensus? Statistical modeling? Machine learning? The physician should be able to grasp the fundamental approach, even if they can't reproduce the code. This transparency allows them to assess whether the methodology is sound and appropriate for their patient.

- The data used to develop and validate the algorithm: Physicians need to know if the training data represents their patient population. Were relevant subgroups included? What were the demographics, disease severity, clinical settings? Were best practices followed, such as using independent datasets for development and validation? Without this information, a physician cannot assess whether the algorithm's performance in development will translate to their specific patients.

- Clinical study results demonstrating performance and limitations: Real-world validation matters. What was the algorithm's performance in clinical studies? In which subpopulations did it perform well or poorly? Where is there high variability or uncertainty? Understanding these limitations helps physicians know when to trust the recommendation and when to be cautious. It's the difference between blind reliance and informed judgment.

- Patient-specific information relevant to the recommendation: The software must show its work for this individual patient. Which of this patient's characteristics influenced the recommendation? What data points were used? Were any expected inputs missing or of questionable quality? This context allows the physician to assess whether the recommendation makes sense for this particular person, not just patients in general.

This is not a checkbox exercise. The FDA expects usability testing to confirm that physicians can actually perform this independent review. The requirement reflects the recognition that reaching a medical decision is inherently a transparent, reasoned process, not the output of an opaque system.
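Because usability testing is what shows that physicians can actually perform the review, an automated completeness check is at best a first gate: it can confirm that each disclosure exists, not that anyone can use it. The sketch below assumes a hypothetical `ReviewBasis` record whose fields mirror the six items listed above; none of the names come from the FDA guidance, and the example values are invented for illustration.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ReviewBasis:
    """Hypothetical record of what a CDS tool exposes for independent review.

    Fields mirror the six disclosure items in this article; names are illustrative.
    """
    intended_use: str = ""
    input_requirements: str = ""
    algorithm_description: str = ""                 # plain language, not source code
    development_and_validation_data: str = ""
    clinical_performance_and_limitations: str = ""
    # Patient-specific basis: inputs that drove this recommendation, and gaps.
    patient_inputs_used: dict[str, object] = field(default_factory=dict)
    patient_inputs_missing: list[str] = field(default_factory=list)


def missing_disclosures(basis: ReviewBasis) -> list[str]:
    """Names of the general (text) disclosures that are empty."""
    return [
        f.name
        for f in fields(basis)
        if isinstance(getattr(basis, f.name), str)
        and not getattr(basis, f.name).strip()
    ]


def review_warnings(basis: ReviewBasis) -> list[str]:
    """Collect gaps a physician would want surfaced; necessary, not sufficient."""
    warnings = [f"missing disclosure: {name}" for name in missing_disclosures(basis)]
    if not basis.patient_inputs_used:
        warnings.append("no patient-specific inputs shown")
    warnings += [f"expected input missing: {name}" for name in basis.patient_inputs_missing]
    return warnings


# Hypothetical example values for a drug-interaction alert.
basis = ReviewBasis(
    intended_use="Adult inpatients; flags potential drug-drug interactions",
    input_requirements="Active medication orders and recent renal function labs",
    algorithm_description="Rule-based lookup against a curated interaction table",
    patient_inputs_used={"active_medications": 7},
    patient_inputs_missing=["recent renal function"],
)
# Flags the two empty disclosures and the missing renal-function input.
print(review_warnings(basis))
```

Keeping the patient-specific fields separate from the general disclosures reflects the distinction drawn above: the general items describe the tool once, while the patient-specific basis has to be shown anew for every recommendation.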

The guidance demonstrates a sophisticated understanding of several critical issues:

- Pattern vs. Point Measurements: The distinction between discrete measurements (medical information) and continuous/sequential sampling (patterns/signals) shows careful thought about clinical reality.

- Automation Bias: Explicit acknowledgment that time pressure increases reliance on automated recommendations, which can make genuine independent review impossible.

- Transparency Requirements: The insistence that algorithms be explainable in plain language, even proprietary ones, prioritizes patient safety over commercial interests.

- Process Over Output: Recognition that medical decision-making is fundamentally about reasoning and justification, not just producing an answer.

What This Means Going Forward

This guidance should not be read as the FDA "getting out of the way" of AI innovation. Rather, it's the FDA saying: "We understand that not all decision support software poses the same risk, and we're focusing our regulatory resources where they matter most."

Software that:

- Analyzes images, waveforms, or genomic patterns

- Provides diagnostic conclusions rather than options

- Operates in time-critical contexts

- Cannot enable independent review of its reasoning

. . . will remain under full regulatory oversight. And rightfully so.

A Call for Humility

Perhaps the most important lesson from this guidance is one of humility. The FDA acknowledges the complexity of modern healthcare IT while insisting on transparency and independent oversight.

Those of us building healthcare AI should embrace the same humility. The technology changes hourly. What worked yesterday may not work tomorrow. And the consequences of getting it wrong in healthcare aren't measured in dollars; they're measured in patient outcomes.

The guidance isn't easing regulation on AI in healthcare. It's clarifying which AI tools need regulation and why. That's not deregulation; it's intelligent risk management.

And in healthcare, that distinction matters.

Based on the final guidance document of January 29, 2026: https://www.fda.gov/regulatory-information/search-fda-guidance-documents/clinical-decision-support-software