There Is No Certification for This

AI compresses knowledge. It does not compress experience. The junior PM in your next interview has access to the same tools you do. What separates you now is judgment. There is no course for that. There never was.

My previous piece told companies what to look for when hiring a product leader for healthcare AI. The short version: stop requiring fourteen years of experience in a field that is three years old, and stop filtering for knowledge that AI can now compress in an afternoon. Hire for judgment, domain depth, and the ability to run an end-to-end product cycle with a team of agents and junior PMs.

Several people responded with a version of the same question: how do I build those skills?

The honest answer is that most of them cannot be built in a training course. Not because the courses are bad. Because the skills in question are learned through a specific mechanism: doing consequential work, being wrong about something that mattered, and adjusting based on what you saw. You cannot simulate that. You can only accumulate it.

AI has made the junior PM faster and more capable than any previous generation. It has also raised the stakes for what a senior PM needs to provide. The knowledge gap between a new PM with good AI tools and a twenty-year veteran is narrower than it has ever been. The judgment gap is not. And in the current market, that distinction is the entire game.

Here is what I mean by that, and why it matters.

Pattern Recognition Is Not Learnable in Three Weeks

I have been in enterprise software long enough to have watched several technology waves break against the same rocks. The SOA promise. The Big Data gold rush. The blockchain moment. The IoT and Industry 4.0 announcements that are still, in many organizations, half-delivered. Each one followed the same arc: a genuine underlying capability, a period of overclaiming, a wave of expensive projects that failed for reasons that had nothing to do with the technology, and a quieter consolidation where the organizations that had built something real emerged with an advantage.

The people who navigated those cycles well were not the ones who had read the most papers. They were the ones who could say, early enough to matter: I have seen this pattern before. Here is where it breaks. Here is what the successful version looks like.

That sentence cannot be acquired in a certification program. It is built through repeated exposure to failure, at enough depth to understand why, over enough time to recognize the early signals the next time they appear. A physician colleague once told me that the difference between a resident and an attending is not knowledge. It is the ability to be calm when something unexpected happens, because you have seen enough unexpected things to know that most of them are navigable. The senior PM equivalent is that same quality: the clarity to think well when the project is off track and the room is looking to you for a call.

My first manager at Microsoft said something I have carried ever since: "I have no problem if you make a mistake, as long as you do not make it again." At the time it sounded like a reasonable management policy. Twenty years later it reads like a precise description of how that stability is actually built. The mistake is the lesson. The repetition is the failure. And the only way to accumulate enough lessons to become truly useful in complex, high-stakes environments is to have been in enough of them, made enough mistakes, and paid enough attention to understand why.

In healthcare AI, most of what is failing right now is failing because of governance gaps, change management problems, and data infrastructure that goes back decades. The PM who recognizes those patterns early, because they have seen versions of them before, is providing something no tool can replicate. Domain depth is what determines whether you can also see what the AI is about to get wrong before it does.

Domain Depth Makes AI Trustworthy, Not Generic

When a PM without domain expertise uses AI to analyze a complex clinical question, the output is fluent and plausible. It is also frequently wrong in ways that are invisible unless you know the domain well enough to interrogate the assumptions.

I write about healthcare AI from a specific vantage point: I am a physician and a product manager simultaneously, and those two things are not separable in the way I do this work. When I read an AI-generated analysis of a clinical workflow, I am reading it the way a clinician reads it, which means I notice when the recommended alert frequency would produce the exact alert fatigue pattern that caused the last three deployments to fail, or when the proposed integration assumes a FHIR endpoint that community clinics in the relevant geography do not have.

That is a judgment advantage: knowing which parts of the AI output to trust and which to interrogate. A PM who has spent years shipping products that reach real clinical workflows develops a specific kind of intuition about where things break. They have seen the nurse abandon the tool at the end of a twelve-hour shift. They have watched the physician override the alert within a week of deployment because the false positive rate was too high. They carry those failures as a filter.

Here is the more subtle point: domain expertise does not just improve your evaluation of AI outputs. It changes the questions you ask. A PM without clinical depth asks the AI whether the product concept is feasible. A PM with clinical depth asks whether it will survive contact with reality at 2 AM in an understaffed unit. Those are not the same question, and only one of them produces a useful answer.

Product Strategy Is Still Entirely Human

AI can support strategy in important ways: it synthesizes competitors, generates market estimates, and pressure-tests assumptions. What it cannot do is develop a differentiated point of view on what to build, why this organization is positioned to win, and what to decline building despite pressure to do otherwise. That requires understanding your organization's actual constraints, your team's real capabilities, and the specific gap in the market that your history and relationships position you to fill. That synthesis is not a reasoning task. It is a judgment task, and it requires the kind of situated knowledge that only comes from being inside an organization long enough to understand what is actually possible versus what merely sounds strategic.

The PMs who create the most value are the ones who invest here precisely because it cannot be automated. Strategy requires conviction. Conviction requires judgment. Judgment requires experience.

Reading the Room Is a Product Skill

The hardest problems I have faced as a product manager were never technical. They were organizational. The stakeholder who agreed in the meeting and then blocked the decision at the implementation stage. The UX designer who was convinced he was an artist and delivered a futuristic interface that no one, including the people who asked for it, could actually navigate. The engineering lead who was building the right thing technically but the wrong thing for the customer, and who needed to hear that in a way that preserved the relationship and the timeline.

None of those situations have a framework. You learn to navigate them the same way a physician learns to deliver difficult news: by doing it badly first, reflecting on what you missed, and slowly developing the ability to read what is actually happening beneath the surface of a conversation.

The most important clinical skill is not diagnosis. It is hearing what the patient is not saying, noticing the detail mentioned almost as an aside that turns out to be the whole problem. That skill transfers directly into product work. The stakeholder who says they support the roadmap but keeps introducing small delays is not a process problem. They are a communication problem, and they require the same quality of attention as the patient who mentions the symptom you almost missed.

It is built through hundreds of hard conversations, enough of which went wrong that you eventually learn to pay closer attention. Strategy tells you where to go. Reading the room is how you get the organization to come with you.

You Are Actually Building Two Products, and Trust Is What Connects Them

When you build a healthcare AI product, you are not building one product. You are building two in parallel, and the AI PM's specific job is to be the architect of trust between them.

The first is the machine product: what the AI agent sees, processes, and acts on. Its data inputs, its reasoning layer, its API surface, its failure modes, its escalation logic, how it behaves when the model drifts, what it does at 3 AM when the hospital network is slow. This product has no user interface. It does not care whether the nurse is tired or the attending is skeptical. It needs to be designed with the rigor you would apply to infrastructure, because that is what it is.

The second is the human product: what the clinician sees, how the recommendation lands in a workflow already at capacity, how much explanation accompanies the alert, what happens when the physician disagrees with the AI and needs to override it, and how that override is recorded for a legal audit trail that may be reviewed three years from now. This product is almost entirely about trust. Not trust as a feature. Trust as a continuous, fragile relationship between a system and a person who is responsible for another person's life.

Most teams build the machine product first because engineers understand it. The human product gets added later, by which point the architectural decisions that shape the human experience are already locked. And trust, once broken in a clinical environment, is extraordinarily difficult to rebuild.

This is the PM's central responsibility in the age of agentic AI: to hold both tracks simultaneously, to understand what each one requires, and to design the interface between them with the same care you bring to either side. Trust is the most critical element in any agentic system, and it lives precisely in that interface. It is earned when the machine is reliable and the human experience makes that reliability visible. It collapses when one side is designed without the other in mind.

No course teaches you to hold both tracks simultaneously under pressure. You build that capacity by watching trust fail in real products and understanding exactly where the design broke down.

High Standards Are a Practice, Not a Setting

The last skill is the simplest to describe and the hardest to maintain: knowing what great looks like, and refusing to ship anything that falls short.

I ran a nine-agent AI design thinking workshop last year on a healthcare readmission problem. Under ten dollars in API costs, three hours of work, and the output reframed the product direction for the following week. But the output was only that useful because I knew what I was looking for and rejected several iterations that were technically coherent but clinically insufficient. The community health worker agent abandoned the prototype during testing, because the prototype assumed broadband and English and a smartphone, and none of those assumptions held for the patients with the highest readmission risk. A PM without clinical depth would likely have shipped that prototype.

Holding the standard when the pressure is to ship is the hardest part of the job at any level. AI amplifies this. It produces plausible output faster than any previous tool, which means the pressure to accept the first reasonable answer is higher than it has ever been.

The PMs who differentiate in the age of AI are the ones who use AI to produce faster, better first drafts, and then apply the same uncompromising standard they would have applied before the tools existed. That combination, speed plus judgment, is what no certification produces and no junior PM with good tools automatically has.

What You Actually Build Toward

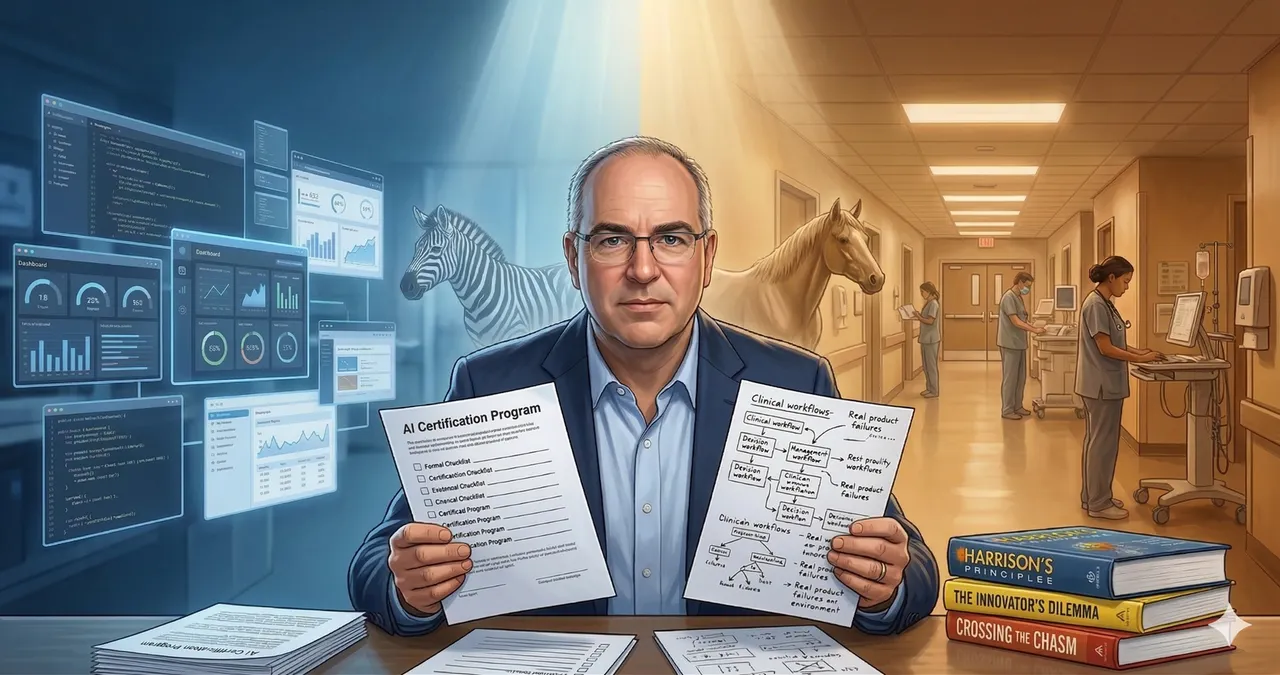

The first article in this series told companies to stop looking for zebras. This one tells PMs something different: the skills that make you the horse are built over time, through work, through failure, and through the discipline to keep caring about quality when every incentive is to move faster.

There is a third layer beyond what this article covers. Accountability, equity, and the ethical architecture of clinical AI are not learnable in a course either. Accountability means knowing what to do when the system and the physician disagree and someone has to be responsible for what happens next. Equity means understanding that a model performing at 94% accuracy can simultaneously be failing a specific population entirely, and that no launch checklist catches this without someone with domain depth and pattern recognition looking for it. That conversation belongs in its own article.

AI will not replace the PM who has the skills described here. It will make that PM dramatically more capable, because every hour that used to go to artifact production is now available for the work that actually requires judgment. But the judgment has to be there first.

There is no certification for that. There never was.

This article draws on experience in healthcare AI, but the principles it describes are not specific to healthcare. The judgment gap, the dual-product challenge, the difficulty of reading organizational dynamics, the discipline of high standards under pressure: these apply to any PM building AI products, in any industry. Healthcare sharpens the stakes. It does not change the fundamentals.