The Question Is No Longer "Can We Build It?"

The bottleneck in tech is no longer writing code; it’s deciding what to build. As AI collapses development time, value shifts upstream to product judgment. In healthcare especially, the models work, but projects fail without real product thinking. The new question isn’t "can we build it?" but "should we?"

Why Product Management and Product Research Are the Most Critical Skills in the Age of AI

Something profound has shifted in how technology companies operate. In the summer of 2025, Andrew Ng (Stanford professor, former Google Brain scientist, and one of the most influential voices in artificial intelligence) made an observation on the No Priors podcast that landed like a thunderclap across the startup world. The biggest constraint on his teams, he said, was no longer writing code. It was deciding what to build.

Projects that once required six engineers and three months, Ng explained, could now be completed by a couple of people over a weekend. Prototypes that took weeks were materializing in a single day. And once the build cycle compressed that dramatically, every other part of the product development loop (gathering feedback, talking to users, making prioritization calls) suddenly felt agonizingly slow. His teams, he admitted, were increasingly relying on gut instinct to keep pace.

Six months later, that observation has only become more urgent. With the release of models like Opus 4.6 and GPT-5.3 Codex, we have crossed into a new era of software development. These are not incremental improvements. They are systems capable of architecting, writing, debugging, and deploying production-quality code with minimal human intervention. A competent engineer with the right AI tooling can now prototype in hours what a full team would have labored over for a quarter. The technical barrier to building software has, for most practical purposes, collapsed.

And that collapse changes everything about where value is created.

The Inversion

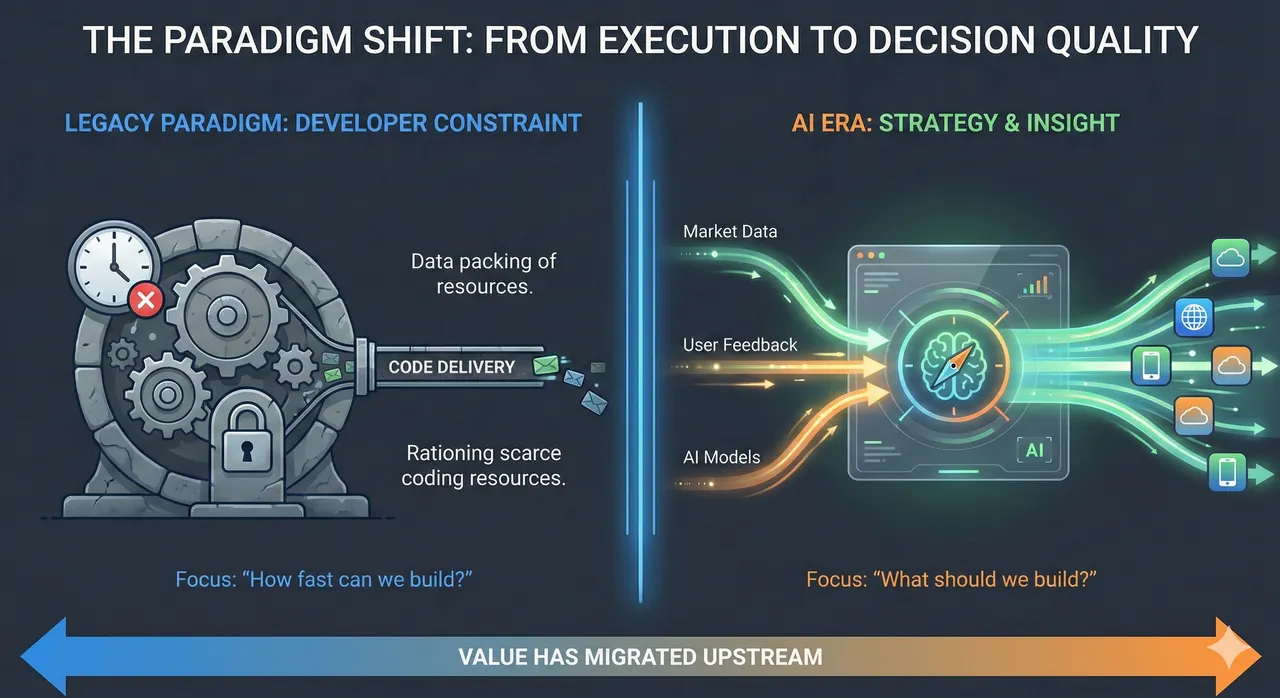

For decades, the defining constraint in technology was engineering capacity. Roadmaps existed because teams couldn't build everything at once. Prioritization frameworks (RICE scores, weighted scoring models, opportunity sizing) were invented to ration a scarce resource: developer time. Product managers spent enormous energy justifying why this feature deserved the next available sprint.
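RICE originated at Intercom and scores a feature as Reach × Impact × Confidence ÷ Effort. The sketch below shows that scarcity-era logic in miniature. It assumes the commonly used conventions: impact on a 0.25 to 3 scale, confidence as a fraction, and effort in person-months. The `Feature` class, the `prioritize` helper, and the backlog are all hypothetical, made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per period (e.g. per quarter); hypothetical unit
    impact: float      # common convention: 3 massive, 2 high, 1 medium, 0.5 low, 0.25 minimal
    confidence: float  # 0.0 to 1.0: how much we trust the reach and impact estimates
    effort: float      # person-months of engineering time

    def rice(self) -> float:
        # Effort sits in the denominator: the score rewards value per unit of scarce build time.
        return self.reach * self.impact * self.confidence / self.effort


def prioritize(features: list[Feature]) -> list[Feature]:
    """Return features sorted from highest to lowest RICE score."""
    return sorted(features, key=lambda f: f.rice(), reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    Feature("bulk export", reach=2000, impact=1, confidence=0.8, effort=2),
    Feature("sso login", reach=500, impact=2, confidence=1.0, effort=1),
    Feature("ai summaries", reach=4000, impact=0.5, confidence=0.5, effort=4),
]

for f in prioritize(backlog):
    print(f"{f.name}: {f.rice():.0f}")
```

As effort estimates shrink and converge, the denominator does less to separate the options. The ranking then comes to depend on reach, impact, and confidence, which are precisely the terms that require understanding users.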

That scarcity is evaporating. When building is cheap and fast, the bottleneck migrates upstream, to the quality of the decisions that determine what gets built and why. The old question was: can we ship this by Q3? The new question is: should we ship this at all?

This is not a theoretical shift. It is already reshaping how the best teams operate. The competitive advantage no longer belongs to the organization that can build fastest. It belongs to the organization that can learn fastest: the one that can identify the right problem, validate it with real users, and iterate on the solution before a competitor even finishes its first prototype.

Product Management as the New Core Competency

A recent Harvard Business Review article by Stanford researchers Amanda Pratt and Melissa Valentine puts a fine point on this. They argue that the real payoff from generative AI comes not from better prompting skills, but from the ability to define valuable problems, evaluate possible solutions, experiment rapidly, and integrate new practices into day-to-day work. These, they note, are the core disciplines of product management.

Their research, drawn from 18 months of studying AI adoption at Google and observations of nearly 2,000 professionals across industries, found a consistent pattern. The employees who succeeded with AI were not the ones with the best technical skills. They were the ones who approached their work with a product mindset: starting with the problem, not the tool.

This finding is striking because it inverts the conventional wisdom about AI readiness. Most organizations have focused their AI training on technical fluency: how to write prompts, how to use specific tools, how to understand model capabilities. That training matters, but it is insufficient. Without a clear sense of which problems are worth solving, employees default to low-impact uses (drafting simple emails, generating generic summaries) and quickly conclude that AI isn't worth the effort.

The Pratt and Valentine research describes a telling example. A manager who initially used AI to generate quick summaries found the outputs generic and the process no faster than her manual workflow. She was ready to abandon the technology entirely. What turned it around was not a better prompt; it was a sharper problem definition. Once she articulated exactly what "valuable" meant in her context (executive-ready communications that required minimal editing), she was able to design a repeatable AI workflow that saved significant time.

The Rise of Product Research

If product management is the new bottleneck, then product research, the disciplined practice of understanding users, markets, and problems before committing resources, becomes the highest-leverage activity an organization can invest in.

Consider the economics. When building a prototype costs a weekend of effort, the cost of building the wrong thing drops dramatically, but it doesn't disappear. The real cost is opportunity cost: every week your team spends iterating on a feature nobody needs is a week not spent on the feature that would change your trajectory. And because AI makes it possible to pursue more ideas simultaneously, the risk of spreading attention across mediocre bets actually increases without strong product research to guide prioritization.

This is where Ng's point about customer empathy becomes essential. He emphasized that effective product leaders need a deep understanding of their customers: not just data dashboards, but the kind of nuanced, almost intuitive grasp of user needs that lets them make confident decisions at the speed the build cycle now demands. Data remains important, but when the iteration cycle compresses from months to days, there isn't always time to wait for statistically significant A/B test results. You need people who have done the upfront work of understanding the problem space deeply enough to make informed bets.

What This Means for Organizations

The implications for how companies staff, structure, and invest are significant.

First, the ratio of product thinkers to builders needs to change. When one engineer with AI assistance can do the work of a small team, organizations don't necessarily need fewer engineers, but they urgently need more people dedicated to discovery, research, and validation. The teams that thrive will be the ones where product managers, designers, and researchers are not outnumbered ten-to-one by developers, but work in close, balanced partnership with them.

Second, experimentation culture becomes non-negotiable. The Pratt and Valentine research found that even employees who believed in AI often struggled to experiment because they lacked time, feared judgment if experiments failed, or worried that AI-assisted success would be seen as "cheating." Leaders must actively dismantle these barriers by modeling experimentation themselves, explicitly protecting time for it, and celebrating learning from failure as much as learning from success.

Third, integration matters more than invention. A brilliant prototype that lives in a standalone chat window is worth nothing if it never connects to the workflows and systems where real work happens. The "last mile" of product development, embedding a solution into the daily operating rhythm of a team, has always been undervalued. In an AI-accelerated world, it becomes the difference between a demo and durable impact.

A Case in Point: Healthcare's Graveyard of Brilliant Models

Nowhere is the gap between can we build it and should we build it more visible than in healthcare.

AI models have been used in medicine for years, and the results, in isolation, are remarkable. The literature is filled with studies showing that AI can detect tumors, predict sepsis, flag drug interactions, and triage patient risk, often matching or exceeding expert physicians. By mid-2024, the FDA had authorized roughly 950 AI-enabled medical devices, and the count has kept climbing. The models work. The papers get published.

And then, overwhelmingly, nothing happens.

Most healthcare AI proofs of concept fail not because the model underperforms, but because nobody did the product work. Consider sepsis prediction, one of the most studied use cases in clinical AI. Models that identify at-risk patients hours before clinical signs appear have been validated repeatedly. Yet hospital after hospital has struggled to make these tools stick. The reason is rarely accuracy. It is alert fatigue: when a system fires too many false positives into an already overloaded EHR inbox, clinicians learn to ignore it within days. The product question was never "can AI predict sepsis?" It was "can we deliver that prediction in a way that a ward nurse managing six patients at 3 AM will actually trust and act on?"
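Why do false positives pile up so quickly? A back-of-the-envelope calculation helps. Sepsis is relatively rare among the patients being screened, so even a model with good sensitivity and specificity produces mostly false alarms. The sketch below is plain Bayes' rule. Every input is a hypothetical assumption chosen for illustration: the 2% prevalence, 90% sensitivity, 90% specificity, and 500 screenings a day do not come from any published sepsis model. It also makes a simplifying assumption that each screening is independent.

```python
def alert_burden(prevalence: float, sensitivity: float, specificity: float,
                 screenings_per_day: int) -> dict[str, float]:
    """Expected daily alert volume for a screening model, via Bayes' rule.

    Simplifying assumption: each screening is an independent event with the
    same prevalence. Real deployments re-screen the same patients, so this
    is only an order-of-magnitude sketch.
    """
    # Probability that a single screening yields a correct alert.
    p_true_alert = prevalence * sensitivity
    # Probability that a single screening yields a false alarm.
    p_false_alert = (1 - prevalence) * (1 - specificity)
    return {
        "true_alerts": screenings_per_day * p_true_alert,
        "false_alerts": screenings_per_day * p_false_alert,
        # Positive predictive value: the share of alerts that are real.
        "ppv": p_true_alert / (p_true_alert + p_false_alert),
    }


# Hypothetical hospital: 500 screenings a day, 2% prevalence,
# and a model with 90% sensitivity and 90% specificity.
burden = alert_burden(prevalence=0.02, sensitivity=0.90, specificity=0.90,
                      screenings_per_day=500)
print(f"true alerts/day:  {burden['true_alerts']:.0f}")
print(f"false alerts/day: {burden['false_alerts']:.0f}")
print(f"share of alerts that are real: {burden['ppv']:.1%}")
```

Under these assumptions, about 49 of roughly 58 daily alerts are false, so fewer than one in six is real. A model that looks strong on paper still asks clinicians to dismiss most of what it says. Alert thresholds, routing, and who sees which alert are therefore product decisions, not modeling ones.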

This is the product management problem in its purest form. Healthcare doesn't lack AI capability. It lacks the disciplined, empathetic product thinking required to translate capability into adoption.

The product manager who truly acts as the "mini CEO" in this space must go far beyond model performance. They need to shadow clinicians during shift changes, understand how physicians interact with the EHR under time pressure, and assess whether the problem their model solves is one clinicians actually experience as painful, or merely one that looks compelling in a conference abstract. They need to navigate FDA clearance pathways, anticipate the cultural resistance that accompanies any new technology in a field where trust is earned slowly, and plan for change management from day one.

The healthcare product manager must answer a question no algorithm can: is this solvable problem actually worth solving for the people who would use the solution every day? Getting to that answer requires knowing your customer first, before writing a single line of code. Healthcare is the sharpest example, but the lesson generalizes across every domain where AI is being deployed.

The Paradox of Abundance

There is a deeper paradox at work here. The easier it becomes to build, the harder it becomes to choose wisely. When technical constraints forced scarcity, they also imposed discipline. You had to prioritize because you couldn't do everything. Now that you theoretically can do everything, the discipline must come from somewhere else: from rigorous product thinking, from genuine understanding of user needs, and from the willingness to say "no" to a perfectly buildable feature because it isn't the right thing to build.

This is why the current moment is so consequential. The organizations and leaders who recognize that the bottleneck has moved, and who invest in product management, product research, and decision-making infrastructure with the same urgency they once invested in engineering capacity, will define the next era of technology. Those who continue to treat product as a support function to engineering will find themselves building faster and faster in the wrong direction.

The code writes itself now. The question is whether you know what it should do.