The AI Paradox: How Artificial Intelligence Is Eroding Human Skills Across Professions

AI isn’t just augmenting expertise; it may be eroding it. From physicians to developers, evidence shows short-term gains can come at the cost of long-term competence. The real risk isn’t dependency; it’s losing the ability to function without AI.

When we adopt new technologies, the promise is always the same: tools will make us better at what we do. But mounting evidence suggests we may be trading long-term competence for short-term convenience. From doctors to developers to students, a troubling pattern is emerging: AI assistance isn't just helping us perform tasks; it may be degrading our ability to perform them independently.

When Doctors Lose Their Eye

Researchers conducting the ACCEPT trial (Artificial Intelligence in Colonoscopy for Cancer Prevention) made a surprising observation. After introducing AI tools to help detect polyps during colonoscopies, they noticed something odd: when the same doctors performed procedures without AI assistance, they actually got worse at finding precancerous adenomas, growths that colonoscopies are specifically designed to catch.

The numbers are stark. Before AI was introduced, doctors had an adenoma detection rate (ADR) of 28.4%. After several months of working with AI on alternating cases, their performance on non-AI cases dropped to just 22.4%, a statistically significant decline of 6 percentage points.

To put this in perspective: if you walked into one of these endoscopy centers for a routine colonoscopy after they'd started using AI, but happened to be scheduled on a day when the AI wasn't active, you'd be about 21% less likely to have dangerous polyps detected compared to just a few months earlier.
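The 6-point drop and the roughly 21% lower chance of detection describe the same change measured two ways. A minimal Python sketch of that arithmetic, using only the two detection rates quoted above; the function and constant names are illustrative and do not come from the study:

```python
def absolute_drop(before_pct: float, after_pct: float) -> float:
    """Decline measured in percentage points."""
    return before_pct - after_pct


def relative_drop(before_pct: float, after_pct: float) -> float:
    """Decline measured as a fraction of the starting rate."""
    return (before_pct - after_pct) / before_pct


# Adenoma detection rates quoted in the article, in percent.
ADR_BEFORE = 28.4
ADR_AFTER = 22.4

print(f"Absolute decline: {absolute_drop(ADR_BEFORE, ADR_AFTER):.1f} percentage points")
print(f"Relative decline: {relative_drop(ADR_BEFORE, ADR_AFTER):.1%}")
```

The relative figure is the one behind the "21% less likely" claim: the 6-point drop divided by the 28.4% starting rate.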

A Pattern Emerges

The phenomenon isn't new; we've been here before. Consider what happened with calculators. When they became commonplace in the 1970s and 80s, we didn't just lose the ability to perform long division; we lost something deeper. The mental arithmetic that previous generations performed daily (carrying numbers in their heads, estimating reasonably, developing number sense) wasn't just busy work. It was cognitive exercise that built foundational mathematical intuition.

Some research suggests that students who rely heavily on calculators struggle more with abstract mathematical concepts. They have weaker mental estimation skills. They're less able to spot obviously wrong answers because they haven't internalized what reasonable numbers look like. On this view, the calculator didn't just replace a skill; it prevented the development of the cognitive scaffolding that makes higher-level mathematics accessible.

When GPS navigation arrived decades later, we saw the same pattern. Our sense of direction atrophied. But more significantly, we lost the spatial reasoning that comes from mentally mapping our environment. Studies suggest that people who navigate with GPS show reduced activity in the hippocampus, the part of the brain responsible for spatial memory and navigation. The skill doesn't just fade from disuse; the brain adapts to the absence of that cognitive demand.

We've always traded skills for convenience, but AI represents something different in scale and scope. It's not just replacing mechanical calculations or simple navigation; it's stepping in for complex cognitive work that defines expertise across entire professions.

And now, research from multiple fields is revealing just how quickly and dramatically AI can degrade human capability.

The Skill Fade Phenomenon: It's Happening Everywhere

The colonoscopy findings aren't an isolated case. They're part of a broader pattern emerging across multiple domains as AI tools become ubiquitous.

Students Are Losing the Ability to Think

In a recent study, researchers used brain imaging to observe what happens when students write essays with ChatGPT assistance versus writing independently. The results were striking: participants who used ChatGPT exhibited significantly less brain activity during the writing task.

But the damage went beyond neural engagement. When later asked to recall what they had written, the AI-assisted students performed far worse. They also reported feeling less ownership over their work, a psychological distancing that suggests something fundamental was lost in the process. They hadn't just offloaded the mechanics of writing; they'd outsourced the thinking itself.

Developers Are Forgetting How to Code

Perhaps most concerning is what's happening to software developers. Anthropic conducted a randomized controlled trial examining how quickly developers learned a new Python library with and without AI assistance, and whether AI impacted their understanding of the code they'd written.

The findings were clear: developers who used AI assistance scored 17% lower on a quiz covering concepts they'd used just minutes before, the equivalent of nearly two letter grades. They completed tasks slightly faster with AI, but this speed advantage wasn't statistically significant. What was significant was the comprehension gap.

The implications are sobering. These weren't programming novices; they were working developers. And the quiz wasn't administered days later; it tested knowledge of code they had just written. AI didn't just fail to help them learn; it appears to have crowded out much of the learning that would otherwise have happened.

This aligns with a well-documented psychological phenomenon: when we rely on tools to perform tasks, our ability to perform those tasks independently can deteriorate. Pilots who depend heavily on autopilot may become less proficient at manual flying. Drivers accustomed to GPS navigation may lose their sense of direction. The pattern is consistent across technologies, but AI's rapid adoption and broad application make the stakes higher than ever.

The Dependence Trap

Does this mean AI is inherently harmful? Not necessarily. The colonoscopy study didn't compare AI-assisted detection rates to pre-AI baseline rates. The ChatGPT study didn't measure whether AI-assisted essays were better quality. The coding study didn't assess whether AI-written code was more reliable.

It's entirely possible that AI produces better outcomes when it's actually in use. But these studies reveal a more insidious problem: we're creating systems where humans become dependent on AI to perform at even their previous baseline level.

Consider the implications across each domain:

In medicine: If doctors' skills degrade when they don't use AI, perhaps AI should be used universally rather than intermittently. But what happens when the technology fails, isn't available, or makes an error? Who can catch it?

In education: If students don't engage deeply with material when using AI, they're not actually learning; they're producing outputs. What happens in interviews, exams, or real-world situations where AI isn't available?

In software development: If developers don't understand the code they write with AI assistance, who maintains it? Who debugs it? Who ensures it's secure? The code exists, but the expertise to support it doesn't.

The pattern is clear: short-term productivity gains may be creating long-term competence deficits.

The Training Crisis: Learning to Think Without Thinking

These findings should concern anyone thinking about the future of work and expertise, but the implications for medical training are particularly devastating. Medicine has always been learned through a combination of observation, practice, and, crucially, mistakes. The traditional pathway, from medical school through internship, residency, and continuing medical education (CME), is built on the assumption that doctors develop expertise by doing the cognitive work themselves.

The End of Learning by Doing

Consider how a gastroenterology fellow learns to detect polyps. They perform hundreds of colonoscopies under supervision, training their eye to recognize subtle tissue variations, unusual colors, tiny irregularities that might indicate disease. Each procedure builds pattern recognition. Each missed polyp that an attending physician catches becomes a learning moment, burned into memory. The fellow's brain literally rewires itself through repetition and error correction.

But what happens when AI detects the polyps instead? The fellow still performs the procedure, but their brain isn't doing the hard work of visual discrimination. The AI highlights the suspicious areas. The cognitive load is offloaded. The neural pathways that would have developed through struggle and attention never form.

The ACCEPT trial showed that experienced endoscopists, doctors who had already developed expertise, saw their skills degrade after just three months of intermittent AI exposure. Now imagine a trainee who never develops those skills in the first place. What does their baseline even look like?

Medical Education's Fundamental Assumption

Medical training is predicated on graduated responsibility. Medical students observe, interns assist, residents perform under supervision, and attendings take full responsibility. Each level involves progressively less oversight as competence develops. But this model assumes that the cognitive work of diagnosis and decision-making is happening inside the trainee's head.

If AI is making the diagnostic observations, suggesting the differential diagnoses, and flagging the abnormalities, what exactly is the trainee learning? They're learning to operate the AI. They're learning to trust its outputs. They're learning a workflow. But are they learning medicine?

The Mistake Paradox

Perhaps most critically, medicine is learned through mistakes. A resident misses a subtle finding on an X-ray, the attending catches it, and the resident never forgets to look for that pattern again. A surgical trainee makes a technical error, experiences the consequence, and develops the muscle memory to avoid it. Error correction is one of the most powerful learning mechanisms we have.

But AI doesn't let you make certain mistakes. If the AI always catches the missed polyp, the endoscopy fellow never experiences the cognitive dissonance of "I looked at that area and saw nothing wrong, but there was a polyp there." That dissonance drives learning. It forces the brain to recalibrate its pattern recognition.

When AI prevents mistakes, it also prevents one of the primary mechanisms through which expertise develops.

Residency in the Age of AI

Imagine a gastroenterology fellowship program in 2034. All colonoscopies are performed with AI assistance because anything else would be considered substandard care. The fellows complete their required case numbers. They achieve technical proficiency in scope manipulation. They can interpret AI outputs. But when they finish training and take their boards, can they actually detect polyps on their own?

Do we even care, if AI will always be available?

But what happens when:

- The AI system goes down during a procedure

- The AI produces a false negative and the doctor doesn't have the baseline skill to catch it

- The doctor works in a rural hospital that doesn't have the latest AI tools

- A new polyp morphology emerges that the AI wasn't trained to recognize

Without foundational skills, doctors become entirely dependent on AI functioning perfectly, being available universally, and never encountering novel situations.

Continuing Medical Education's Obsolescence

CME currently exists to keep practicing physicians updated on new techniques, new evidence, and new standards of care. But how do you maintain skills that are being actively degraded by daily practice with AI?

Traditional CME might involve reviewing cases, practicing on simulators, or discussing diagnostic challenges with peers. But if doctors spend 95% of their time working with AI assistance, occasional CME sessions without AI won't be enough to maintain skills. It's like asking someone to maintain their manual driving skills when they use autopilot for 95% of their commute.

We may need entirely new models: regular "unplugged" practice sessions, mandatory non-AI case requirements, competency testing without AI assistance. But these solutions fight against the reality that AI-assisted performance is genuinely better in many cases. Why would we deliberately use inferior approaches for training when patient outcomes matter?

The Generational Divide

We're about to create a stark divide in medical expertise. Physicians trained before widespread AI integration developed baseline skills through traditional methods. They may experience degradation, as the ACCEPT trial showed, but they have something to degrade from.

Physicians trained primarily with AI assistance may never develop those baseline skills at all. They'll be native to an AI-augmented practice environment, which could be fine, as long as that environment never changes and AI never fails.

The question isn't whether AI makes current practice better. It often does. The question is whether we're creating the next generation of physicians who can't actually practice medicine without it.

Beyond Medicine

While medicine provides the starkest example because of its life-or-death stakes and formalized training pathway, the same dynamics apply across professions:

Law students learning to research with AI may never develop the ability to construct legal arguments from first principles. Engineering students using AI-assisted design may never internalize the physics that constrain their designs. Accounting students using AI for analysis may never develop the intuition for what numbers should look like.

Every profession built on observation, practice, and learning from mistakes faces the same fundamental challenge: AI is optimizing for today's performance while potentially eliminating tomorrow's expertise.

The most critical question may be this: For which tasks do we want to maintain human expertise, and for which are we willing to accept complete dependence on AI? Because the evidence suggests we're likely to end up with one or the other, not both.

The Bottom Line

The promise of AI remains enormous. These tools can help us work faster, catch errors, and tackle complex problems. But the accumulating research reveals a darker side: AI doesn't just assist us; it can actively prevent us from developing and maintaining the expertise needed to function without it.

We risk creating a world where doctors can't detect polyps, students can't write coherent arguments, and developers can't understand their own code unless AI is actively helping them. And we're moving in that direction remarkably quickly.

This isn't a call to abandon AI. It's a call for honest reckoning with what we're trading away. We need implementation strategies that acknowledge how AI changes human cognition and behavior. We need educational systems that ensure people develop foundational skills before becoming dependent on AI. We need monitoring frameworks that track not just what we can do with AI, but what we retain the ability to do without it.

The goal shouldn't be choosing between human expertise and artificial intelligence. It should be figuring out how to integrate them without creating generations of people who can't function when the technology isn't there, or worse, who can't even recognize when it's making mistakes.

Because right now, we're not just becoming dependent on AI. We're becoming incapable without it. And that's a very different, and far more dangerous, outcome.

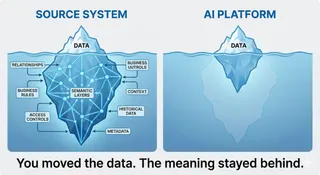

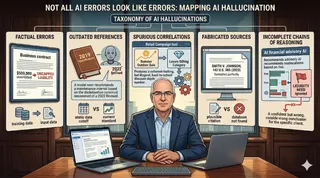

This article synthesizes findings from multiple studies: the ACCEPT trial examining AI in colonoscopy, research on brain activity during AI-assisted writing, and Anthropic's study on AI-assisted coding. Together, they reveal a consistent pattern of skill degradation across professional domains as AI assistance becomes commonplace.