Not Every Problem Deserves an Agent

Most agentic AI failures happen before a line of code is written. The use case was wrong. The cost model was missing. Two frameworks most teams skip, and pay for later.

A developer, an accountant, a graphic designer, a film director, a composer, and a product manager all use the exact same interface to communicate with AI: a text box. That has never been true of mature technology. It will not stay true for this one.

The people most affected by your AI product are almost never in the room when you build it. They don't appear in your analytics. They weren't in the meeting when the roadmap was approved. They will never give you feedback. You owe them something anyway.

AI compresses knowledge. It does not compress experience. The junior PM in your next interview has access to the same tools you do. What separates you now is judgment. There is no course for that. There never was.

Healthcare AI job postings keep asking for unicorns: 14 years of experience in a field that is three years old, deep AI expertise, deep clinical expertise, deep product expertise. That person does not exist. And even if they did, they would not fix the real problem.

In healthcare, AI can already predict. The hard problem is reasoning, and reasoning depends entirely on context. The physician who takes a complete history, examines carefully, and aggregates everything from the EHR to the nursing home fax is not being thorough. They are building the product.

People don't buy a drill. They buy a hole. AI is the drill. Every major technology wave produces the same confusion. SQL became infrastructure. Java became infrastructure. Cloud became a checkbox. AI is doing the same thing. We are in the expensive middle of that arc right now.

Twenty years of customer interviews, workshops, and journey maps. Then agentic AI arrived, and every framework I trusted turned out to share one assumption I had stopped noticing: that the human is always smarter than the tool. Here's what breaks when that stops being true.

For 20 years, I translated between customers and engineers. Now I architect decision systems with AI. But here's the catch: you're designing for two customers, humans and their AI agents. Design for one, forget the other, and it fails.

An MVP was meant to test ideas, not to become architecture. Now we’ve started stacking prototypes into production systems nobody designed as systems. When one layer fails, the whole structure drifts silently. In medicine, that’s not technical debt. It’s patient risk.

My first AI-assisted article was polished, accurate, and completely unrecognizable as mine. The problem wasn’t the model. It was that I never taught it how I think. If you want AI to sound like you, you have to externalize your reasoning first.

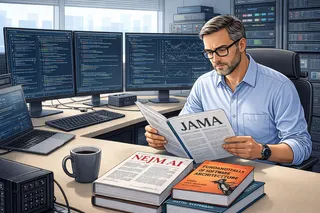

In healthcare AI, policy shifts now appear first in journals like JAMA and NEJM, then quietly become grant conditions and RFP requirements. Many tech teams miss this signal. The winners will be those who translate medical literature into architecture before it becomes mandatory.