AI Evals: What the Checkmarks Actually Prove

Your team ran evals. Every test passed. The results look like the QA matrix you've signed off on a hundred times. They're not. Here's what AI evals actually catch, and where they go silent.

Most agentic AI failures happen before a line of code is written. The use case was wrong. The cost model was missing. Two frameworks most teams skip, and pay for later.

Designing agentic systems is like teaching a class of toddlers to behave. You set the rules. You arrange the room. But you cannot test every move. The design problem changed. Most enterprise teams haven't noticed yet.

Agentic AI is not a smarter chatbot. It’s a system that can hold the full clinical picture across fragmented data, coordinate actions, and escalate when needed. But its value depends on data, interfaces, and accountability designed right from the start.

Twenty years of customer interviews, workshops, and journey maps. Then agentic AI arrived, and every framework I trusted turned out to share one assumption I had stopped noticing: that the human is always smarter than the tool. Here's what breaks when that stops being true.

For 20 years, I translated between customers and engineers. Now I architect decision systems with AI. But here's the catch: you're designing for two customers, humans and their AI agents. Design for one, forget the other, and it fails.

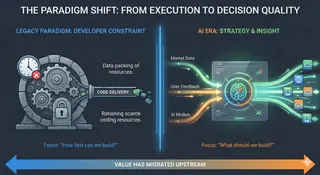

The bottleneck in tech is no longer writing code; it’s deciding what to build. As AI collapses development time, value shifts upstream to product judgment. In healthcare especially, models work, but projects fail without real product thinking. The new question isn’t "can we build it?" but "should we?"