Nobody Taught Medical Students to Wait for Clean Data

The physician's job has always been data synthesis. Fragments, approximations, family filters, descriptions of pill geometry. Consumer health AI was built for a different patient. One that has never walked through a clinic door.


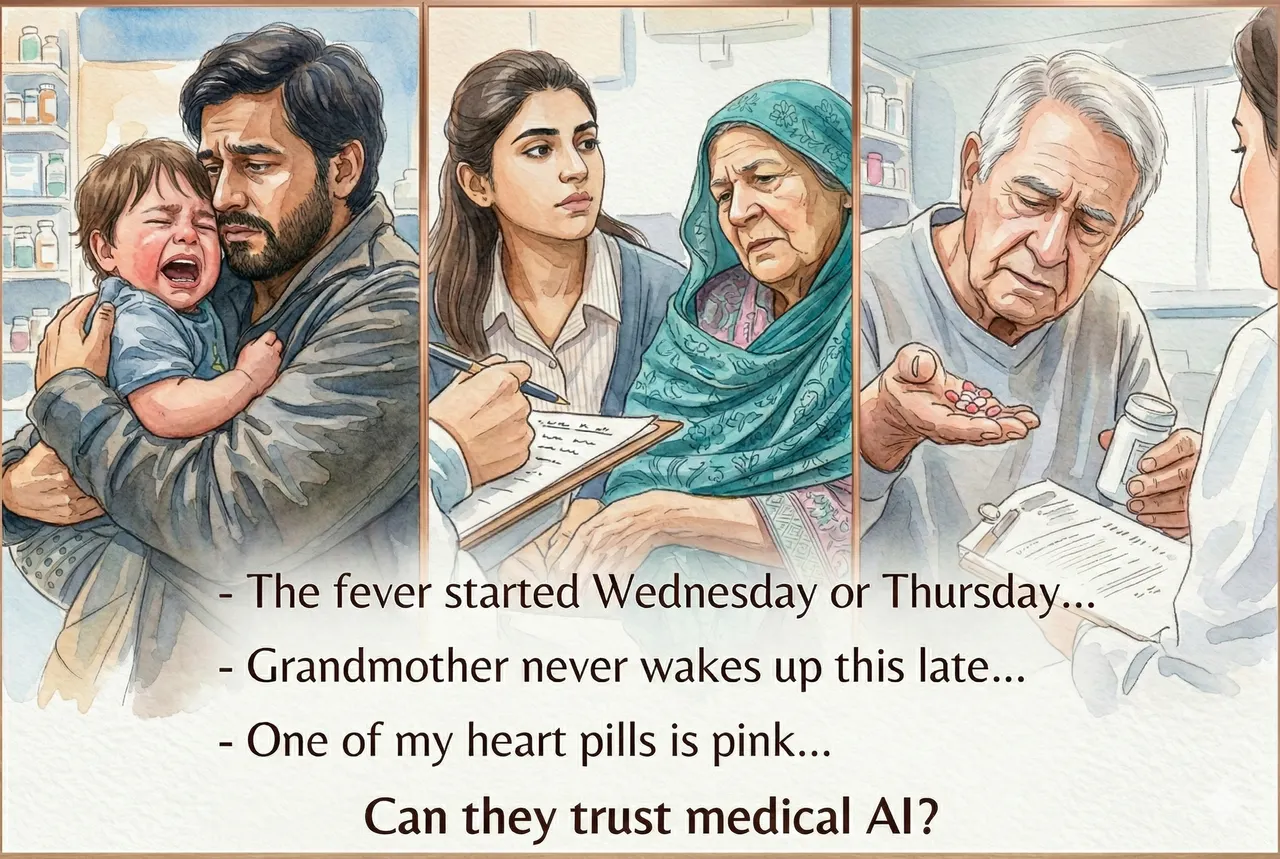

A father walks into the ER at 11 p.m. with a crying, febrile toddler. His wife stayed home with a newborn. He knows nothing about developmental milestones, can't say exactly when the fever started, and doesn't know what's normal for this child at this age. He's running on no sleep and panic.

A young woman sits in a clinic chair next to her 84-year-old grandmother, who has been unwell for several days and speaks only a dialect of Urdu. The granddaughter is translating. The grandmother is not coherent. The translation is approximate.

An elderly gentleman with chest pain is trying to remember his medications. "Two white round pills for my blood, one round yellow for my heart, an elongated pink for my stomach, two big white ones for my sugar." That is his medication list.

This is the real environment in which physicians work. Not structured intake forms. Not complete histories. Fragments, approximations, family filters, and descriptions of pill geometry.

And a skilled physician navigates every one of these with a practiced methodology:

What is the risk level, before I have all the information? What is the most probable differential diagnosis given what I do have? What is the minimum set of questions I need answered to confirm or rule it out? What other data sources can I reach: previous visits, pharmacy records, a paper discharge letter in the patient's bag, a phone call to a family member who wasn't there?

The physician's job has always been data synthesis. Aggregating from imperfect sources. Filling gaps. Helping a patient remember what they forgot. Reading a prescription label photographed on a phone.

Consumer health AI was built on a different assumption: that users would arrive with complete, coherent, structured information. That patients would know what questions to ask and how to answer them accurately.

That assumption has never described a single real patient encounter in the history of medicine.

Two recent Nature studies found that healthcare AI fails precisely in the conditions where real patients show up: fragmented, stressed, incomplete. Both measured the model. Neither asked whether the system had any methodology for data synthesis at all.

The physician has that methodology. It took years to build.

We built a great model. We forgot the patient.