Governance: The Word Everyone Uses and Nobody Agrees On

Why data governance, AI governance, and master data governance are all part of the same spectrum, and why that matters more than ever.

We're in a strange moment for governance.

The EU AI Act is moving into enforcement. Data privacy regulations are fragmenting across jurisdictions. Enterprises are racing to deploy generative AI while their compliance teams scramble to figure out what "responsible use" even means in practice.

And in the middle of all this, everyone keeps using the word "governance" as if we all agree on what it means.

We don't.

If I put your data team, your AI team, and your SAP team in a room and asked them all to define "governance," I'd get at least three different answers. Your data team would talk about quality, lineage, and stewardship. Your AI team would talk about fairness, model risk, and responsible use. Your SAP team would talk about master data workflows, golden records, and change approvals.

They're all right. And they're all incomplete.

Governance isn't a single discipline. It's a spectrum. And the organizations that treat it as one fragmented program after another, each with its own framework, committee, and slide deck, are the ones that keep reinventing the wheel.

Here's the position I want to stake out: the governance problem isn't a capability gap. It's a visibility gap. Most enterprises already have 70% of what they need. They just can't see how the pieces connect.

Let me break this down.

Data Governance and AI Governance: Two Questions, One Continuum

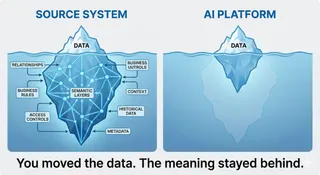

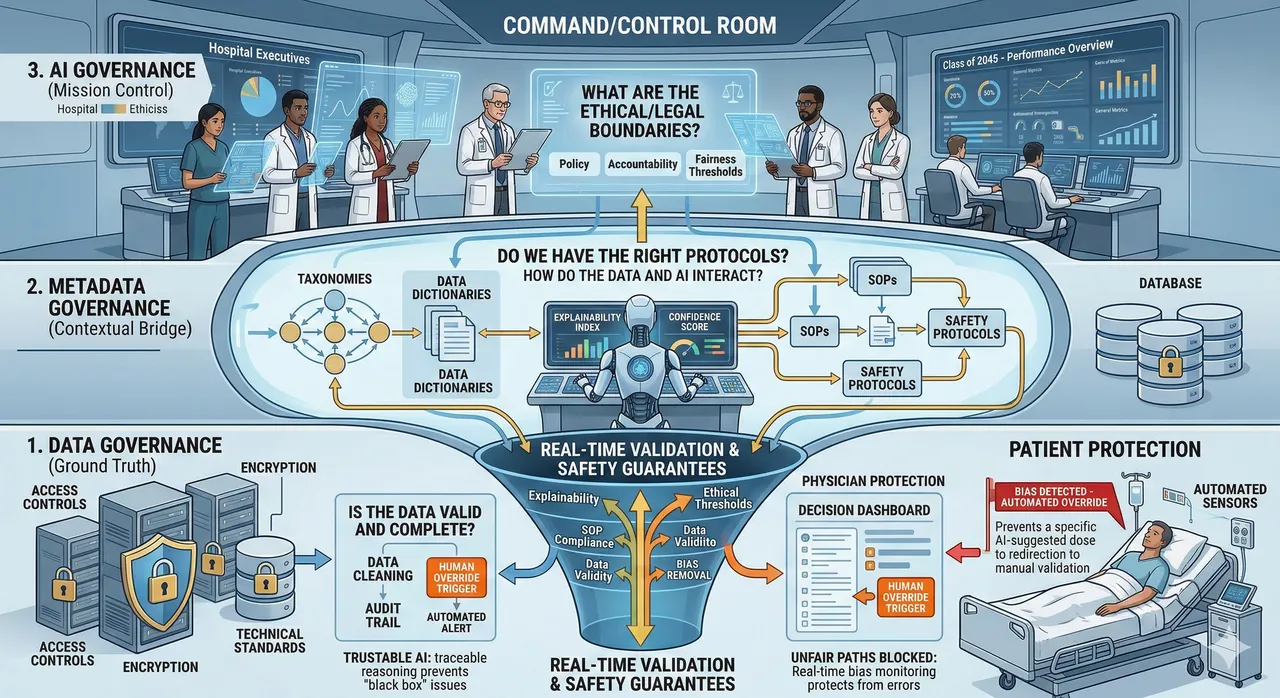

Data governance is the most established governance discipline. It answers a foundational question: Can we trust our data?

That means defining ownership, measuring quality, controlling access, and tracking how data flows across systems. Most mature organizations have the building blocks: stewards, quality rules, audit trails, access controls.

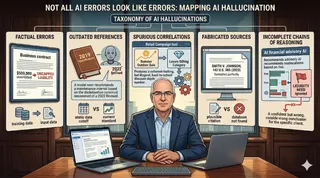

AI governance picks up where data governance stops, but it asks a fundamentally different question: Can we trust what the system does with the data?

This is the shift from managing information to managing decisions. When an AI model recommends a loan denial, flags a medical risk, or auto-generates customer communications, you're no longer just worried about whether the input data was accurate. You're worried about whether the outcome is fair, explainable, and safe.

AI governance introduces concerns that data governance was never designed to handle: bias and fairness testing, human-in-the-loop design, drift monitoring, output validation, and incident response. It also shifts the accountability chain. In data governance, you have data owners and stewards. In AI governance, you need system owners, responsible human decision-makers, and oversight authorities. The accountability surface grows because the impact surface grows.

But here's what most organizations get wrong: they treat these as separate programs. New frameworks, new committees, new everything. The smarter approach is to recognize that most data governance capabilities are already AI governance capabilities, just applied to a different lifecycle stage.

Data quality management builds trust in model performance. Lineage tracking helps surface bias. Access controls define boundaries for ethical use. Auditability enables defensible decision-making.

Same muscles. Different emphasis: inputs versus outputs.
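To make that overlap concrete, here is a minimal sketch of a coverage check. The four pairings come straight from the paragraph above; the `coverage` function and the inventory passed to it are hypothetical, and a real inventory would be far messier than a list of strings.

```python
# Existing data governance capability -> AI governance need it supports.
# The pairings mirror the paragraph above; everything else is illustrative.
SUPPORTS = {
    "data quality management": "trust in model performance",
    "lineage tracking": "surfacing bias",
    "access controls": "boundaries for ethical use",
    "auditability": "defensible decision-making",
}


def coverage(existing_capabilities):
    """Return (needs already covered, needs with no supporting capability)."""
    have = {name.lower() for name in existing_capabilities}
    covered = {need: cap for cap, need in SUPPORTS.items() if cap in have}
    gaps = [need for cap, need in SUPPORTS.items() if cap not in have]
    return covered, gaps


# Hypothetical inventory from an existing data governance program.
covered, gaps = coverage(["Lineage tracking", "Access controls", "Auditability"])
print(gaps)  # ['trust in model performance']
```

The point of the exercise is the direction of the lookup: start from what already exists and ask what it covers, rather than starting from a blank list of AI governance requirements.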

And with generative AI expanding the surface area of what AI systems produce, from content to code to customer interactions, the urgency to connect these two disciplines has never been higher. The organizations that figured out data governance a decade ago have a real head start here, if they're willing to extend what they've built rather than start over.

Master Data Governance: The Operational Layer Most Governance Conversations Miss

This is where the conversation gets practical, and where I want to challenge a blind spot in how governance gets discussed.

Whether you run SAP Master Data Governance (MDG) or another platform for managing core data objects, the principle is the same: master data governance is the operational execution layer. It's where governance policies become enforceable processes.

In practice, this means structured workflows for creating, changing, and consolidating the master data that runs your business: customers, vendors, materials, financial hierarchies, business partners. It means validation rules, duplicate detection, approval chains, and quality checks that happen at the point of master data creation and change, embedded in the processes your business runs on every day.

This isn't a policy document sitting in SharePoint. It's governance you can touch.

Take SAP MDG as an example. When someone wants to create a new vendor record, MDG defines who requests it, who reviews it, what quality checks it passes through, and who approves it before it hits your production system. After a merger, MDG provides the framework to match, merge, and harmonize records across systems at scale.
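The request, check, approve pattern can be sketched in a few lines. To be clear, this is not SAP MDG's API or data model; every name here (`VendorRequest`, `validate`, `approve`, the specific rules) is a hypothetical stand-in meant only to show the shape of governance that runs at the point of creation.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING_APPROVAL = "pending_approval"
    REJECTED = "rejected"
    APPROVED = "approved"


@dataclass
class VendorRequest:
    name: str
    tax_id: str
    country: str
    requested_by: str
    status: Status = Status.PENDING_APPROVAL
    issues: list[str] = field(default_factory=list)


def normalize(name: str) -> str:
    """Crude matching key: lowercase, alphanumeric characters only."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def validate(request: VendorRequest, existing: list[VendorRequest]) -> VendorRequest:
    """Quality checks and duplicate detection, run before any approver sees the request."""
    if not request.tax_id.strip():
        request.issues.append("missing tax ID")
    if len(request.country) != 2:
        request.issues.append("country must be a 2-letter code")
    for vendor in existing:
        same_tax_id = bool(request.tax_id) and vendor.tax_id == request.tax_id
        if same_tax_id or normalize(vendor.name) == normalize(request.name):
            request.issues.append(f"possible duplicate of {vendor.name!r}")
            break
    if request.issues:
        request.status = Status.REJECTED
    return request


def approve(request: VendorRequest, approver: str) -> VendorRequest:
    """Approval step: only clean requests, and never self-approval."""
    if request.status is not Status.PENDING_APPROVAL:
        raise ValueError("only pending requests can be approved")
    if approver == request.requested_by:
        raise PermissionError("requester cannot approve their own request")
    request.status = Status.APPROVED
    return request


# Hypothetical usage: one existing vendor, one near-duplicate request.
existing = [VendorRequest("Acme GmbH", "DE123", "DE", "alice", Status.APPROVED)]
duplicate = validate(VendorRequest("ACME GmbH.", "DE999", "DE", "bob"), existing)
print(duplicate.status, duplicate.issues)
```

Real platforms use far more sophisticated matching than a normalized name comparison. What matters is that the rejection happens before the record reaches production, not in a cleanup project two years later.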

And here's the connection most people miss: master data governance is foundational AI governance that nobody labels as such.

A duplicate vendor record isn't just an ERP nuisance. It's a data quality issue that propagates into your analytics layer, your spend analysis models, and eventually your AI-driven procurement recommendations. A miscategorized material doesn't just mess up reporting. It trains your demand forecasting model on flawed assumptions.
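A toy example shows how quietly this happens. The invoice figures and vendor IDs below are invented for illustration, and `golden_record` stands in for whatever mapping a consolidation step would actually produce.

```python
from collections import defaultdict

# Hypothetical invoices. "V-1001" and "V-2047" are the same supplier, entered twice.
invoices = [
    ("V-1001", 40_000),
    ("V-2047", 35_000),
    ("V-3000", 60_000),
    ("V-1001", 10_000),
]

# Assumed output of a master data consolidation step: duplicate ID -> surviving ID.
golden_record = {"V-2047": "V-1001"}


def spend_by_vendor(rows, merge_map=None):
    """Total spend per vendor, optionally folding duplicates into one record."""
    merge_map = merge_map or {}
    totals = defaultdict(int)
    for vendor_id, amount in rows:
        totals[merge_map.get(vendor_id, vendor_id)] += amount
    return dict(totals)


def top_vendor(totals):
    """Vendor with the highest total spend."""
    return max(totals, key=totals.get)


raw = spend_by_vendor(invoices)
clean = spend_by_vendor(invoices, golden_record)

print(top_vendor(raw))    # V-3000
print(top_vendor(clean))  # V-1001
```

With the duplicate in place, the largest supplier looks like the second largest. Any downstream model that ranks, clusters, or negotiates on spend inherits that error without ever seeing it.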

When master data governance enforces a validation rule or rejects a poorly formed record, it's protecting the integrity of the data that AI systems will eventually consume. It's doing governance work before any model gets involved.

I've seen this disconnect firsthand in enterprise environments. A company will spend millions implementing master data governance with robust workflows, data quality rules, and stewardship models. Then a separate team will kick off an AI governance initiative and start from a blank whiteboard, completely unaware that the operational governance backbone they need is already running. The disconnect is organizational, not technical. And bridging it is one of the highest-value, lowest-cost governance improvements most enterprises can make.

The Broader Spectrum

Data governance, AI governance, and master data governance are the three disciplines I've focused on here, but they're not the only points on the spectrum.

Model Risk Management (MRM) is worth noting. Financial institutions have spent years governing the full lifecycle of analytical models (validation, documentation, performance monitoring, retirement) under regulatory guidance such as SR 11-7, the U.S. Federal Reserve's supervisory guidance on model risk management. They didn't wait for an AI governance framework to tell them models need governance. They learned it through financial crises and built rigorous practices as a result. There's a lot the broader market can learn from how banks approach model oversight.

Regulatory and compliance governance cuts across all of these. With the EU AI Act, evolving global privacy regulations, and sector-specific requirements tightening, the ability to track, interpret, and operationalize regulatory change is becoming its own discipline. It doesn't replace data or AI governance; it wraps around them and creates the external pressure that forces organizations to take governance seriously.

The point is: governance is a family of interconnected disciplines, all trying to answer variations of the same core questions. Who is accountable? What are the rules? How do we enforce them? And what happens when something goes wrong?

So What Should You Actually Do?

If you're trying to figure out your governance story, here's my practical advice.

Stop starting from scratch. Map what you already have. Your data stewardship roles, master data workflows, compliance controls, and access management are all governance capabilities. Label them as such and build from there.

Connect the layers. Data governance feeds AI governance. Master data governance operationalizes data governance. MRM provides patterns for model oversight. Regulatory governance sets the boundaries. Draw the lines between them instead of running parallel programs that never talk to each other.

Match rigor to risk. Not every AI use case needs the same level of governance. A recommendation engine for internal content is different from an automated credit decisioning model. Scale your controls to the stakes involved.

Assign accountability early. The hardest part of governance isn't the technology or the frameworks. It's getting people to own outcomes. Define who is responsible for data quality, model performance, and decision oversight before you launch your next AI initiative, not after something breaks.

Start with one proof of concept. Governance doesn't need to be perfect on day one. Apply it to a real use case, learn what works, and iterate. The worst governance programs are the ones that spend two years designing a framework nobody uses. The best ones start small, prove value, and earn their mandate through results.
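Of these, "match rigor to risk" is the easiest to turn into something executable. Here is a minimal sketch, assuming three yes/no questions per use case. The criteria, tier names, and control lists are my own illustrative assumptions, not taken from the EU AI Act or any other framework, and a real tiering scheme would need input from legal and risk teams.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    affects_individuals: bool  # decisions about customers, patients, employees
    fully_automated: bool      # no human reviews the output before it takes effect
    regulated_domain: bool     # e.g. credit, health, employment


# Illustrative control sets per tier (assumed, not prescribed by any regulation).
CONTROLS = {
    "low": ["owner assigned", "basic usage logging"],
    "medium": ["owner assigned", "output validation", "drift monitoring"],
    "high": [
        "owner assigned",
        "output validation",
        "drift monitoring",
        "bias and fairness testing",
        "human-in-the-loop review",
        "incident response plan",
    ],
}


def risk_tier(use_case: UseCase) -> str:
    """Scale the tier with the stakes: who is affected, where, and how automatically."""
    if use_case.affects_individuals and use_case.regulated_domain:
        return "high"
    if use_case.affects_individuals or use_case.regulated_domain:
        return "high" if use_case.fully_automated else "medium"
    return "low"


recommender = UseCase("internal content recommender", False, True, False)
credit = UseCase("automated credit decisioning", True, True, True)

print(risk_tier(recommender))  # low
print(risk_tier(credit))       # high
```

Even a crude rule like this forces the useful conversation: someone has to answer the three questions for each use case, and that someone becomes the accountable owner.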

The Bottom Line

Governance is elusive because we keep treating it as a collection of separate problems. Data governance asks, "Is the data correct?" AI governance asks, "Is the outcome acceptable?" Master data governance asks, "Is the record clean and approved?" MRM asks, "Is the model still performing?" Compliance asks, "Are we following the rules?"

These aren't competing questions. They're complementary layers of the same organizational capability: making trustworthy, accountable, defensible decisions at scale.

The organizations that get this right won't be the ones with the fanciest frameworks. They'll be the ones that see governance as a single connected spectrum, recognize that most of the pieces are already in place, and have the organizational clarity to wire them together.

Governance isn't a framework problem. It's a visibility problem. And the enterprises that solve for visibility will define what trustworthy AI looks like for the next decade.