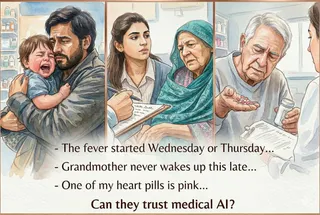

The Blueprint Was Already There

This week I read three papers that made me happy. JAMA. NEJM. Nature Medicine. All randomized trials. All showing AI outperforming standard care. Then I read the methodology. None of them used LLMs. The AI winning in top journals in 2026 was built before the hype cycle. The blueprint was always there.