AI Evals: What the Checkmarks Actually Prove

Your team ran evals. Every test passed. The results look like the QA matrix you've signed off on a hundred times. They're not. Here's what AI evals actually catch, and where they go silent.

Dr. Friedman is a physician turned product manager with 20+ years building enterprise software and leading digital transformation. He writes about the intersection of technology, human behavior, and healthcare, where solutions directly impact lives.

Most agentic AI failures happen before a line of code is written. The use case was wrong. The cost model was missing. Two frameworks most teams skip, and pay for later.

Designing agentic systems is like teaching a class of toddlers to behave. You set the rules. You arrange the room. But you cannot test every move. The design problem changed. Most enterprise teams haven't noticed yet.

I don't remember much from school. My memory starts the day I entered clinical training. Medicine is learned by burning encounters into memory. AI is very good at removing that weight. The weight was the learning.

The physician was the gatekeeper. The hospital was the hub. AI changed both. Now Amazon, Google, and OpenAI are racing to own what comes next. The patient relationship is the prize, and the bidding has started.

Patients aren't waiting for the healthcare system to catch up. They have wearables, direct-access labs, referral-free MRIs, and AI interpreting all of it. The parallel system is already running.

She arrives with a plan her AI already helped her build. The physician now has two choices: become a trusted continuum who adds what AI cannot, or become a friction point blocking a plan she already made. Only one of those sustains the relationship.

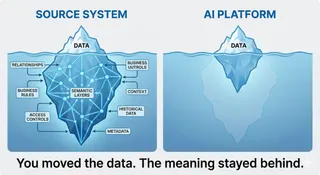

Enterprise AI has a structural catch-22: context lives where you cannot run agents, and compute lives where context does not exist. Move the data and you lose the meaning. That gap is why most deployments produce outputs that are technically impressive and operationally thin.

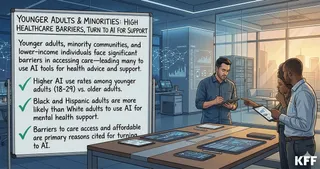

One in three Americans uses AI for health advice. The heaviest users are uninsured, low-income adults who cannot access a doctor. The AI they are relying on was built on data from patients who look nothing like them. That is not an equity talking point. It is a product failure.

A developer, an accountant, a graphic designer, a film director, a composer, and a product manager all use the exact same interface to communicate with AI: a text box. That has never been true of mature technology. It will not stay true for this one.

Agentic AI is not a smarter chatbot. It’s a system that can hold the full clinical picture across fragmented data, coordinate actions, and escalate when needed. But its value depends on data, interfaces, and accountability designed right from the start.

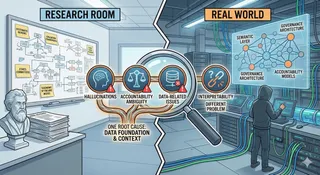

New peer-reviewed research named four critical challenges blocking healthcare AI deployment. The research got the problems right. But three of those four share one root cause nobody is building toward. One doesn't. Here's the response from the real world.